
#Azure


- 08/10/2024


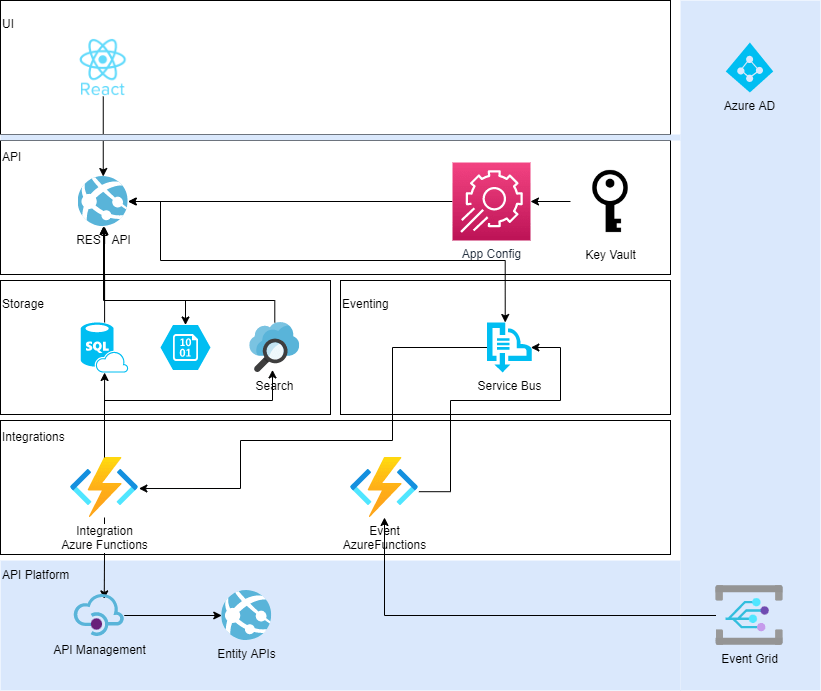

The latest project we completed was implemented as an event-driven architecture (EDA) with Azure Functions. A shared SQL database served as storage, and the Azure Functions wrote data into it. We also implemented APIs over the SQL data with Azure App Service and published them through Azure API Management.

Data integrations from the customer’s API Platform (also implemented by Zure :)) were implemented as a number of Azure Functions triggered by either Azure Service Bus queues or Azure Event Grid events. Each integration was initiated by an event from the API Platform, received as an Azure Event Grid event.

Most of the Azure Functions had similar business logic.

Error handling was implemented by throwing exceptions. Exceptions are expensive, but if something fails, it is an exceptional case anyway. The failures were either data not being in the defined format, or one or more related services being temporarily unavailable.

We also considered using the Outbox pattern but decided it was not needed after all. Instead, if any step of the import fails while processing a single event, an exception is thrown and the event is put back on the Service Bus queue. A retry policy makes sure the event is attempted a couple of times. If the retries fail, the event is moved to the Service Bus dead-letter queue. From there it can be manually moved back to the main queue and retried. If it still ends up in the dead-letter queue, a developer can debug it by copying the event data from the dead-letter queue.
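The retry and dead-letter flow can be illustrated with a small in-memory simulation. This is only a sketch in Python, not the project's code and not the Azure Service Bus API: the function `process_queue`, the handler `import_entity`, and the limit of three deliveries are all assumptions made for the example.

```python
from collections import deque

MAX_DELIVERY_COUNT = 3  # assumed value; the post only says "a couple of times"


def process_queue(events, handler, max_delivery_count=MAX_DELIVERY_COUNT):
    """Deliver each event to handler; retry on exception, then dead-letter."""
    queue = deque((event, 1) for event in events)  # (event, delivery attempt)
    dead_letter = []
    while queue:
        event, attempt = queue.popleft()
        try:
            handler(event)
        except Exception as exc:
            if attempt < max_delivery_count:
                queue.append((event, attempt + 1))  # back to the main queue
            else:
                dead_letter.append((event, repr(exc)))  # kept for debugging
    return dead_letter


def import_entity(event):
    # Hypothetical handler: rejects data that is not in the agreed format.
    if "id" not in event:
        raise ValueError("event has no id")


dead = process_queue([{"id": 1}, {"name": "broken"}], import_entity)
```

In this sketch the malformed event is attempted three times and then lands in `dead`, together with the error text a developer would start debugging from.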

We don’t have any transactional data; we always request the latest version of the entity data from the API Platform. That is why it doesn’t matter in which order events are processed from the queues.

All event processing is done with idempotence in mind: it does not matter how many times a single event is processed, the end result is always the same.
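A minimal sketch of what idempotent processing can look like, assuming the event carries only an entity identifier and the handler overwrites the stored row with the latest version. The names `handle_entity_changed`, `fetch_latest`, and the dictionaries standing in for the API Platform and the SQL database are hypothetical.

```python
def handle_entity_changed(event, fetch_latest, database):
    """Upsert the latest entity state; safe to run any number of times."""
    entity_id = event["entity_id"]
    latest = fetch_latest(entity_id)  # always read the current version
    database[entity_id] = latest      # upsert: overwrite, never append


# Stand-ins for the API Platform and the SQL database (assumptions).
api_platform = {42: {"name": "Widget", "price": 10}}
database = {}

event = {"entity_id": 42}
for _ in range(3):  # simulate the same event being delivered three times
    handle_entity_changed(event, api_platform.__getitem__, database)
```

Because the handler replaces the row instead of applying a delta, processing the event once or three times leaves `database` in the same state.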

Azure Functions run in parallel. To make sure a single entity would not be updated by multiple Azure Functions at once, we used sessions in Azure Service Bus. Sessions ensure that only one Azure Function instance at a time processes events with the same session key.
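The effect of sessions can be approximated locally with one lock per session key. The sketch below is an analogy built on Python threads, not the Service Bus session API; the `session_id` field and the counters used to observe concurrency are assumptions made for the example.

```python
import threading
import time
from collections import defaultdict
from concurrent.futures import ThreadPoolExecutor

session_locks = defaultdict(threading.Lock)  # one lock per session key
registry_lock = threading.Lock()             # guards the shared dictionaries
active = defaultdict(int)                    # handlers running per key right now
max_active = defaultdict(int)                # highest concurrency seen per key


def process(event):
    key = event["session_id"]
    with registry_lock:
        lock = session_locks[key]
    with lock:  # only one handler per session key at a time
        with registry_lock:
            active[key] += 1
            max_active[key] = max(max_active[key], active[key])
        time.sleep(0.01)  # simulated work on the entity
        with registry_lock:
            active[key] -= 1


# Eight events for two entities, processed by four parallel workers.
events = [{"session_id": f"entity-{i % 2}"} for i in range(8)]
with ThreadPoolExecutor(max_workers=4) as pool:
    list(pool.map(process, events))
```

Events for different keys still run in parallel, while events that share a key are handled one after another, which is the property the sessions provided.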

For both Azure Functions and Azure App Service, MediatR was used to implement commands and queries. We didn’t end up using different databases for reads and writes, so technically it’s not CQRS.
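MediatR is a .NET library, so the following Python sketch only illustrates the underlying idea: a mediator that routes command and query objects to their handlers. The `Mediator` class and the `UpsertProduct` and `GetProduct` requests are hypothetical and do not mirror MediatR's actual API.

```python
from dataclasses import dataclass


class Mediator:
    """Routes each request object to the handler registered for its type."""

    def __init__(self):
        self._handlers = {}

    def register(self, request_type, handler):
        self._handlers[request_type] = handler

    def send(self, request):
        return self._handlers[type(request)](request)


@dataclass
class UpsertProduct:  # command: changes state
    product_id: int
    name: str


@dataclass
class GetProduct:  # query: reads state
    product_id: int


products = {}  # a single store for reads and writes, as in the post

mediator = Mediator()
mediator.register(UpsertProduct, lambda c: products.__setitem__(c.product_id, c.name))
mediator.register(GetProduct, lambda q: products.get(q.product_id))

mediator.send(UpsertProduct(1, "Widget"))
result = mediator.send(GetProduct(1))
```

Callers depend only on the request types, so each handler stays small and can be unit tested on its own.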

The solution was tested with unit tests, mainly covering the MediatR handlers, and with integration tests that exercised a single feature by triggering Azure Functions or calling API endpoints against a real SQL database.

All Azure Functions run under a single App Service plan. We also have an Azure App Service providing APIs for the data. Both the Azure Functions and the API App Service use the same SQL database and are deployed at the same time. Database migrations are also run from the CI/CD pipeline.

It was really easy to debug a single Azure Function. All you needed to do was disable the Azure Function in question in Azure and then run it on your local dev box. All other Azure Functions can still run in Azure and process events at the same time. We were using Azure Service Bus in the dev environment since there was no emulator for it at the time. That’s why the Azure Function needs to be disabled in Azure while debugging locally.

Event-driven architecture implemented with Azure Functions is really, really, really great! You can divide your business logic into small pieces and then develop, debug, test, and document only one small piece at a time. And with Azure Functions and the number of triggers they provide, you can concentrate on business logic, not the plumbing.
